Getting Started with Azure Networking

Even though Kyndryl Cloud Uplift on Azure is running within an Azure data center, Azure treats Kyndryl Cloud Uplift as if it were a separate on-premises location, for network isolation purposes. To make networking more straightforward for our customers, Kyndryl Cloud Uplift and Microsoft Azure have partnered in the design of several common network patterns, which you can put to work in your own applications. Whether you need to connect your Kyndryl Cloud Uplift Environment to your on-premises applications, or your Azure Virtual Network (vNet), or both, there is a network topology to address your needs.

Table of Contents

- Kyndryl Cloud Uplift on Azure Network Connectivity Considerations

- TCP/IP Performance Tuning for Kyndryl Cloud Uplift on Azure IBM Power Logical Partitions (LPARs)

- Option 01: Direct VPN into Kyndryl Cloud Uplift

- Option 02: VPN into Azure Hub

- Option 03: Global Reach Enabled ExpressRoute

- Appendix

- Kyndryl Cloud Uplift UI ports

- Global Reach Enabled ExpressRoute Guide

- Connect your Kyndryl Cloud Uplift application just to On-Premises, using an existing ExpressRoute to On-Premises

- Connect your Kyndryl Cloud Uplift application just to an Azure Virtual Network (vNet) through an ExpressRoute

- Connect your Kyndryl Cloud Uplift application to both On-Premises and an Azure vNet

- Connect your Kyndryl Cloud Uplift application over an existing On-Premises ExpressRoute circuit, when the connection between Kyndryl Cloud Uplift and Azure is a VPN

- Connect your Kyndryl Cloud Uplift application to both On-Premises and an Azure vNet, when you need transitive routing

- Next steps

Kyndryl Cloud Uplift on Azure Network Connectivity Considerations

- Regions – Kyndryl Cloud Uplift Global Regions

Note: Create a lock on the Azure resource group that contains the Kyndryl Cloud Uplift on Azure service. A lock helps prevent someone from accidentally deleting the Kyndryl Cloud Uplift on Azure subscription that resides in that resource group.

- Satellite Centers Connectivity:

There are two methods to connect satellite centers into Kyndryl Cloud Uplift on Azure via VPN connection:

- Method 1: VPN between satellite facilities terminating directly into Kyndryl Cloud Uplift on Azure

- Method 2: VPN connection between satellite facilities and Azure VPN Gateways in your Azure hub leveraging an Azure Virtual WAN hub or an Azure Route Server

Best practice: To determine the latency of your connection into Kyndryl Cloud Uplift on Azure from each site (e.g., your data center or distribution warehouse), we recommend conducting a Kyndryl Cloud Uplift Speed Test.

TCP/IP Performance Tuning for Kyndryl Cloud Uplift on Azure IBM Power Logical Partitions (LPARs)

This article discusses common best practices for tuning IBM Power workloads running IBM i and AIX on Kyndryl Cloud Uplift on Azure. One of the biggest challenges organizations face when migrating and running Power workloads in Kyndryl Cloud Uplift on Azure is tuning the TCP/IP stack, both for local traffic (within a Kyndryl Cloud Uplift on Azure environment) and for external traffic, either between on-premises and Kyndryl Cloud Uplift on Azure or between deployments in different Kyndryl Cloud Uplift on Azure regions. This article makes several references to general Azure tuning techniques as well as those from IBM for AIX and IBM i.

Executive Summary

An efficient network is key to rapid cloud migration and ongoing application performance for any cloud deployment. There are several factors that impact network performance, two of those being latency and fragmentation.

To establish a baseline for network performance based on several tuning parameters for both AIX and IBM i, Kyndryl Cloud Uplift tested the following scenarios:

- Two LPARs on the same Power Hosting Node (PHN)

- Two LPARs within the same Kyndryl Cloud Uplift environment and subnet

- Two LPARs, one in Kyndryl Cloud Uplift on Azure’s Singapore region to a second in Kyndryl Cloud Uplift on Azure’s Hong Kong region using VPN to connect both regions

- Two LPARs, one in Kyndryl Cloud Uplift on Azure Singapore region to a second in Kyndryl Cloud Uplift on Azure’s Hong Kong region using Azure ExpressRoute provisioned at 1Gb/sec

Each of these scenarios was tested for both AIX and IBM i. For AIX, Kyndryl Cloud Uplift tested using SCP as a file transfer protocol in addition to IPERF3. For IBM i, Kyndryl Cloud Uplift used FTP only.

Kyndryl Cloud Uplift testing found:

- LPARs of both Operating Systems performed better with TCP Send Offload enabled.

- Network performance between the two systems was best when source and target machines matched for both MTU and Send/Receive buffer settings.

- A Maximum Transmission Unit (MTU) of over 1500 for WAN connections was problematic. Kyndryl Cloud Uplift recommends using an MTU between 1250 and 1330 for ExpressRoute and VPN connections.

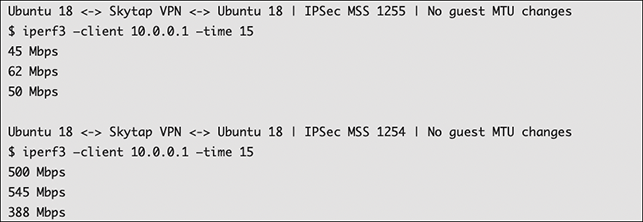

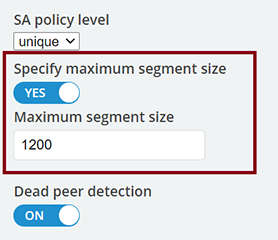

- When using a VPN, set an MSS Clamp no lower than 1200 and no higher than 1254.

- When transferring large amounts of data, look at using applications that can support multiple file transfer connections or transfer multiple files at once. This allows the client to maximize available bandwidth of a connection.

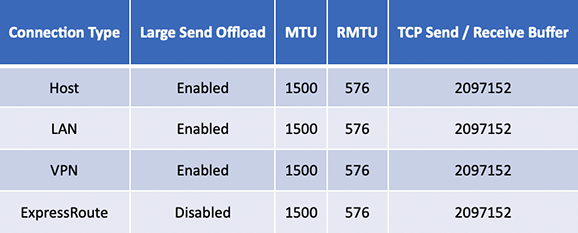

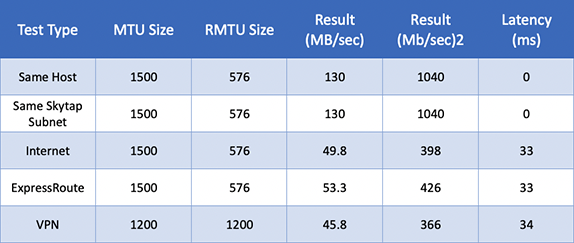

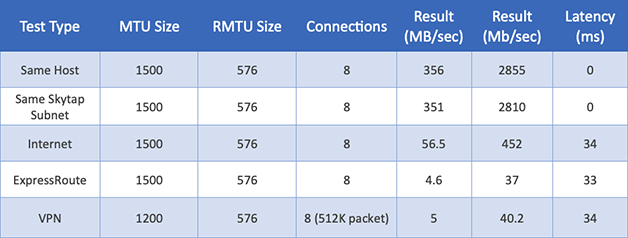

Table 1 – AIX Network Performance Recommendations for local traffic and between Kyndryl Cloud Uplift on Azure Singapore and Hong Kong region

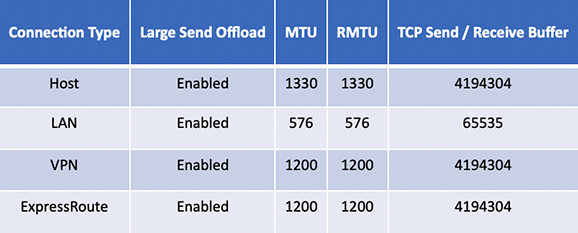

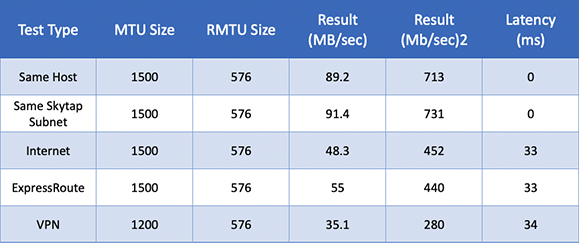

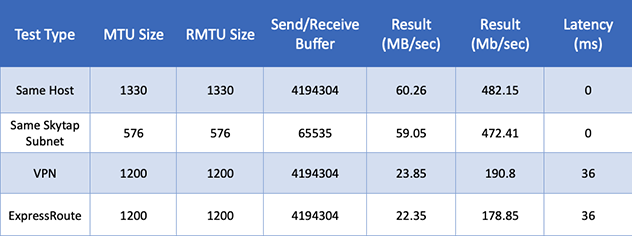

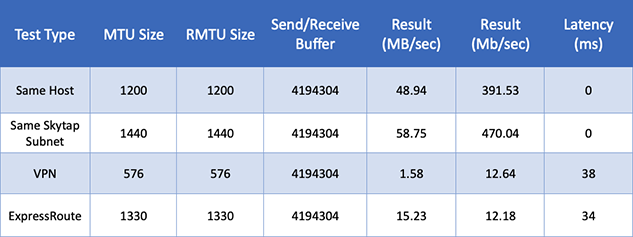

Table 2 – IBM i Network Performance Recommendations for local traffic and between Kyndryl Cloud Uplift on Azure Singapore and Hong Kong regions

These settings were shown to have the best performance between LPARs of like Operating System in these scenarios but should only be considered a baseline. Performance will vary based on the configuration and settings within your Kyndryl Cloud Uplift environment.

The Role of MTU, Fragmentation, and Large Send Offload

The MTU is the largest frame (packet), measured in bytes, that can be sent over a network interface. The general default setting for most devices is 1500. However, for Power workloads this is commonly not the case, as discussed later in this article.

Fragmentation

Fragmentation occurs when a packet is larger than the device, LPAR, and/or virtual machine (VM) is set to handle. When a larger packet is received, it is either fragmented into two or more packets and resent, or dropped altogether. If fragmented, the device on the receiving end must then reassemble the original packet so it can be processed by the end system.

Fragmentation generally has a negative impact on performance. When a packet must be fragmented, it requires more CPU on the device or receiving system. The device must hold all the fragments, reassemble them, and then send the packet to the next device. If that device has an MTU size that doesn’t match, this process repeats until the traffic finally reaches the target system. The impact is increased latency, because devices have to reassemble the packets, and the potential for fragments to be received out of order. In some cases, fragments are dropped, and the packet has to be resent.
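As an illustration of the mechanics described above, the following minimal Python sketch computes how a single IPv4 packet would be split when it crosses a link with a smaller MTU. It assumes a 20-byte IPv4 header with no options and follows the IPv4 rule that every fragment except the last carries a multiple of 8 payload bytes. The function name `fragment_sizes` is illustrative and is not part of any Kyndryl Cloud Uplift or Azure tooling.

```python
IPV4_HEADER = 20  # bytes; assumes an IPv4 header with no options


def fragment_sizes(packet_len: int, path_mtu: int) -> list:
    """Return the on-wire size of each IPv4 fragment for one packet."""
    if packet_len <= path_mtu:
        return [packet_len]  # fits as-is, no fragmentation needed
    # Every fragment except the last must carry a multiple of 8 payload bytes.
    per_fragment = (path_mtu - IPV4_HEADER) // 8 * 8
    if per_fragment <= 0:
        raise ValueError("path MTU is too small to carry any payload")
    payload = packet_len - IPV4_HEADER
    sizes = []
    while payload > 0:
        chunk = min(per_fragment, payload)
        sizes.append(chunk + IPV4_HEADER)  # each fragment repeats the IP header
        payload -= chunk
    return sizes


# A 9000-byte jumbo frame crossing a 1500-byte MTU link.
print(fragment_sizes(9000, 1500))
```

Each extra fragment repeats the IP header and has to be held for reassembly downstream, which is where the added CPU cost and latency come from.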

The Pros and Cons of Modifying the MTU

Generally, the larger the MTU, the more efficient your network and the faster you can move data between two systems. This is the first consideration as to network tuning with Power workloads as they often don’t default to the standard 1500 MTU. For AIX, it is common to see Jumbo Frames enabled which has an MTU of 9000. For IBM i, the default is at the opposite end of the spectrum at 576, which dates back to being optimized for dial up modems.

If the packet is set larger than devices in the path can handle, the packets will be fragmented, new header information will be added, and the packet is sent onto its destination. This all adds overhead. For low latency connections, it will prevent you from consuming maximum bandwidth. For WAN connections such as ExpressRoute and VPN over greater distances, it can have a much greater impact. This is especially apparent during the migration phase where you are working to move a lot of data in a short amount of time.

Conversely, if you set the MTU size too small, then the destination system must process more packets and that too hinders performance. The key is to find the right MTU that is optimized for the speed and latency of the connection you are using.
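To make the trade-off concrete, here is a rough Python sketch that counts how many TCP segments are needed to move a given amount of data at different MTU sizes, and how many bytes go to headers. It assumes 20-byte IPv4 and 20-byte TCP headers with no options and ignores link-layer framing, retransmissions, and any tunnel overhead, so treat the numbers as a lower bound rather than a measurement.

```python
import math

HEADERS = 40  # 20-byte IPv4 header + 20-byte TCP header, no options (assumption)


def packets_and_overhead(data_bytes: int, mtu: int) -> tuple:
    """Return (packet count, total header bytes) to carry data_bytes at this MTU."""
    mss = mtu - HEADERS  # payload bytes per packet
    packets = math.ceil(data_bytes / mss)
    return packets, packets * HEADERS


# Compare the IBM i default (576), the common default (1500), and Jumbo Frames (9000).
for mtu in (576, 1500, 9000):
    packets, overhead = packets_and_overhead(1_000_000, mtu)
    print(f"MTU {mtu}: {packets} packets, {overhead} header bytes")
```

A smaller MTU means more packets for the receiver to process, while a larger MTU only helps if every device on the path can carry it without fragmenting.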

Kyndryl Cloud Uplift and LPAR MTU

Kyndryl Cloud Uplift Power Hosting Nodes (PHNs) use VIOS. VIOS is the virtualization layer provided by PowerVM which controls all virtual input and output from a given LPAR. It virtualizes the physical hardware. This exists both for network traffic to local and WAN connections, in addition to traffic to Kyndryl Cloud Uplift’s Software Defined Storage layer. Traffic that leaves the LPAR will be governed by VIOS and the Shared Ethernet Adapter (SEA) of the VIOS LPAR. Kyndryl Cloud Uplift sets the MTU of the SEA to 1500. As a result, setting your LPAR’s MTU higher than 1500 will result in fragmentation. However, this changes if you have multiple LPARs on the same network that are on the same subnet and PHN. In this situation, traffic never leaves the PHN and VIOS essentially “steps out of the way” allowing the LPAR virtual adapters to communicate directly with one another. The result is very fast network throughput and the ability to use Jumbo Frames.

Azure and Fragmentation

Azure’s Virtual Network stack drops out-of-order fragments, that is, fragmented packets that do not arrive in their original order. When this happens, the sender must resend those packets. Microsoft does this to protect against a vulnerability called FragmentSmack that was announced in November of 2018. This can have a substantial impact on high-latency connections, or on connections with a lot of fragmentation because of an MTU that is set too high.

Kyndryl Cloud Uplift and Azure ExpressRoute

Kyndryl Cloud Uplift and Azure ExpressRoute use an MTU size of 1438. Sending traffic between two Kyndryl Cloud Uplift on Azure regions will result in an MSS of 1348. This includes 20 bytes of IP header and 20 bytes of TCP header, then Azure modifies this which adds another 50 bytes.
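The arithmetic behind these figures can be written out as a short Python sketch. The header sizes and the 50-byte Azure overhead are the values stated above; the function and constant names are illustrative only.

```python
IP_HEADER = 20       # bytes, as stated above
TCP_HEADER = 20      # bytes, as stated above
AZURE_OVERHEAD = 50  # bytes added by Azure, as stated above


def effective_mss(mtu: int, extra_overhead: int = 0) -> int:
    """Payload bytes left in one packet after headers and any added overhead."""
    return mtu - IP_HEADER - TCP_HEADER - extra_overhead


# 1438 - 20 - 20 - 50 = 1348, matching the MSS quoted for ExpressRoute.
print(effective_mss(1438, AZURE_OVERHEAD))
```

The same function can be used to see how much payload remains for any other MTU and overhead combination on your own path.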

Kyndryl Cloud Uplift on Azure and VPN

Kyndryl Cloud Uplift on Azure’s virtual networking stack includes native support for IPSec. This allows users to create VPN tunnels on the Kyndryl Cloud Uplift on Azure side without having to add IPSec Gateways and/or virtual appliances. Like ExpressRoute, IPSec connections should use an MTU no higher than 1438 and an MSS no higher than 1348.

Setting the correct MSS is critical in reducing fragmentation. To illustrate the point, Kyndryl Cloud Uplift ran an IPERF test that compared traffic sized within the MSS of 1254 against traffic sized one byte above it, at 1255, which resulted in fragmentation.

The test was run between the Hong Kong and Singapore regions. The difference is dramatic: approximately a 10x delta. The lesson learned is to avoid fragmentation wherever possible, because its cost to file transfer speed grows as the latency between source and destination increases.

Large Send Offload

Large Send Offload (LSO) allows the LPAR in Kyndryl Cloud Uplift on Azure to send large packets and frames through the network. When enabled, the Operating System creates a large TCP packet and sends it to the Ethernet Adapter for segmentation before it is sent through the network. For LPARs that are on the same PHN with LSO enabled, the result is extremely high network throughput. Kyndryl Cloud Uplift on Azure’s VIOS SEA has LSO enabled, so enabling this setting can improve communication between LPARs on the same subnet within a Kyndryl Cloud Uplift Environment. However, when the traffic is destined for another LPAR in the network, it must go through the SEA with an MTU of 1500, which will result in fragmentation.

For AIX with LSO enabled, you will see a maximum throughput of ~2Gb/sec between two AIX LPARs on the same subnet, due to the MTU size of 1500. With two LPARs on the same PHN, VIOS and the physical network layer are bypassed, allowing far greater speeds, up to a maximum of about 2.6Gb/sec.[1]

For IBM i with LSO enabled, performance is very protocol dependent. Using FTP as a test, maximum throughput between two IBM i systems on the same subnet but running on different PHNs is ~472Mb/sec with an MTU of 576. When running on the same PHN, performance increases slightly to ~482Mb/sec with an MTU size of 1330. It is important to note that you can expect higher throughput with multiple connections. Therefore, when transferring a lot of data from an IBM i system, you should use multiple FTP streams. For example, if you have three 5GB files to transfer, use three separate interactive sessions to increase your overall throughput.
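The multiple-stream idea is not specific to interactive FTP sessions. As a general-purpose sketch, the Python below runs several transfers concurrently from a client that drives them. The `placeholder_transfer` function is a hypothetical stand-in, as are the file names; in practice you would replace it with whatever FTP or SCP call your environment uses.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterable, List


def transfer_in_parallel(
    paths: Iterable[str],
    transfer_one: Callable[[str], str],
    max_streams: int = 3,
) -> List[str]:
    """Run transfer_one(path) for every path, up to max_streams at a time.

    Results are returned in the same order as the input paths.
    """
    with ThreadPoolExecutor(max_workers=max_streams) as pool:
        return list(pool.map(transfer_one, paths))


def placeholder_transfer(path: str) -> str:
    # Hypothetical stand-in: replace with a real FTP or SCP transfer call.
    return f"sent {path}"


# Three files, three concurrent streams (file names are made up).
print(transfer_in_parallel(["file1.sav", "file2.sav", "file3.sav"], placeholder_transfer))
```

Threads suit this case because each transfer spends most of its time waiting on the network rather than on the CPU.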

VPN and MTU

If you are using a VPN between Kyndryl Cloud Uplift Environments in different Kyndryl Cloud Uplift on Azure regions or between Kyndryl Cloud Uplift on Azure and an external environment, you need to account for the increased overhead for the VPN encapsulation.

For AIX, testing was done between two Kyndryl Cloud Uplift on Azure regions with ~34ms of latency using SCP between both systems. Kyndryl Cloud Uplift recommends setting the MTU to 1200 with an MSS of 1250 with LSO enabled. In this configuration, Kyndryl Cloud Uplift has seen transfer rates of ~366Mb/sec. When the physical network layer is bypassed, Kyndryl Cloud Uplift has seen far greater transfer speeds at a maximum of about 2.6Gb/sec.

For IBM i, testing was done between two Kyndryl Cloud Uplift on Azure regions with ~34ms of latency using FTP between both systems. Kyndryl Cloud Uplift recommends setting the MTU to 1200 with an MSS of 1250 with LSO enabled. In this configuration, Kyndryl Cloud Uplift has seen transfer rates of ~190Mb/sec.

Azure ExpressRoute and MTU

If you are using an ExpressRoute between Kyndryl Cloud Uplift Environments in different Kyndryl Cloud Uplift on Azure regions or between Kyndryl Cloud Uplift on Azure and an external environment, you need to account for two important factors:

- Azure’s virtual networking stack will drop out of order packets. This often increases as latency increases. As a result, fragmentation needs to be managed carefully for optimal network performance.

- ExpressRoute adds some IP overhead when used with Kyndryl Cloud Uplift on Azure. The amount added is 50 bytes per frame/segment.

For AIX, testing was done between two Kyndryl Cloud Uplift on Azure regions with ~34ms of latency using SCP between both systems. Kyndryl Cloud Uplift recommends setting the MTU to 1200 with an MSS of 1250 with LSO enabled. In this configuration, Kyndryl Cloud Uplift has seen transfer rates of ~366Mb/sec, and when the physical network layer is bypassed, it allows far greater speeds at a maximum of about 2.6Gb/sec.

For IBM i, testing was done between two Kyndryl Cloud Uplift on Azure regions with ~34ms of latency using FTP between both systems. Kyndryl Cloud Uplift recommends setting the MTU to 1200 with an MSS of 1250 with LSO enabled. In this configuration, Kyndryl Cloud Uplift has seen transfer rates of ~190Mb/sec per connection.

Latency and Round-Trip Time

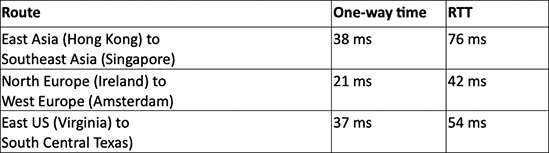

Network latency is governed by the speed of light. As a result, the maximum network throughput for TCP/IP is going to be dependent on the round-trip time (RTT) between source and target devices. This table shows the distance between Kyndryl Cloud Uplift on Azure regions.

Table 3 – Example latency and round-trip time
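The dependence of throughput on round-trip time can be sketched with the standard window-over-RTT bound: a single TCP connection can have at most one window of data in flight per round trip. The Python below applies that bound to the 2097152-byte buffer size and ~34ms latency mentioned in this article. It is a theoretical ceiling under ideal conditions (no loss, no fragmentation, no other bottleneck), not a prediction of measured results.

```python
def max_tcp_throughput_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound for one TCP connection: one full window per round trip."""
    bits_per_round_trip = window_bytes * 8
    round_trips_per_second = 1000 / rtt_ms
    return bits_per_round_trip * round_trips_per_second / 1_000_000


# 2 MiB send/receive buffer over a ~34 ms round trip.
print(round(max_tcp_throughput_mbps(2_097_152, 34), 1), "Mb/sec ceiling")

# The same link with a 64 KiB window, for comparison.
print(round(max_tcp_throughput_mbps(65_536, 34), 1), "Mb/sec ceiling")
```

Because the ceiling applies per connection, this also suggests why several parallel connections can use more of a high-latency link than a single one.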

Power OS Tuning Summary

Every deployment of AIX and IBM i that is on-premises and/or in Kyndryl Cloud Uplift on Azure will have different variables from what was tested and described in this article. Kyndryl Cloud Uplift tests were conducted in a relatively controlled environment between two Kyndryl Cloud Uplift on Azure regions with a known network path and devices. When communicating with systems in Kyndryl Cloud Uplift on Azure regions to those located on-premises and other cloud providers, different devices and their configurations will have a significant impact on network performance. This needs to be accounted for early in your network design and solutions. It is always best to tune and optimize from a position of knowing vs. not knowing. As a result, Kyndryl Cloud Uplift has provided a summary of the testing done and the tuning parameters that were used for each. A list of the parameters is provided below with a link to the appropriate documentation for you to learn more, and/or make the change to your system.

AIX Network Tuning[2]

For AIX network tuning, IBM’s guide for AIX Network Adapter Performance is a great reference. Key tuning parameters that were used to tune Kyndryl Cloud Uplift performance include:

- LSO AIX/IBM i – The TCP LSO option allows the AIX TCP layer to build a TCP message up to 64 KB long. The adapter sends the message in one call down the stack through IP and the Ethernet device driver. LSO is enabled by default on Kyndryl Cloud Uplift on Azure’s PHN SEAs (Shared Ethernet Adapter of VIOS).

- Maximum Transmission Unit (MTU) AIX/IBM i – The maximum network packet size that can be transmitted or received by a network device.

- Remote Maximum Transmission Unit (RMTU) – The MTU size that is used for remote networks. AIX attempts to calculate which networks are remote by looking at the route table. IBM i allows you to set the MTU for each route.

- Default TCP/IP Send/Receive Buffer Size AIX/IBM i – The tcp_recvspace option specifies how many bytes of data the receiving system can buffer in the kernel for incoming traffic. Likewise, the tcp_sendspace tunable specifies how much data the sending application can buffer when sending data. For these tests, Kyndryl Cloud Uplift found that the default AIX Send/Receive Buffer size of 2097152 bytes yielded the best performance. For IBM i, several different values performed well.

- Maximum Segment Size (MSS) – The largest amount of data, measured in bytes, that a computer or communications device can handle in a single, unfragmented piece. For VPN testing between regions, an MSS of 1200 was used on both sides of the VPN tunnel.

- Latency – Traffic inside a Kyndryl Cloud Uplift Environment sees near-zero network latency. All remote tests were done between Hong Kong (CN-HongKong-M-1) and Singapore (SG-Singapore-M-1). Latency between these regions was between 33ms and 38ms.

Table 4 – AIX Network Performance with LSO Enabled using SCP

Table 5 – AIX Network Performance with LSO Disabled using SCP

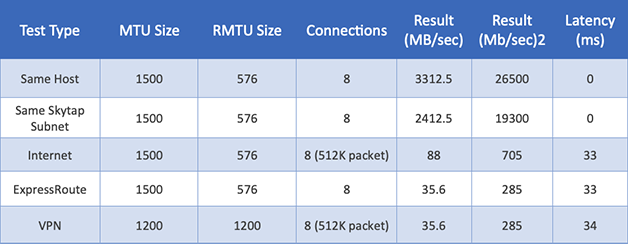

Table 6 – AIX Network Performance with LSO Enabled using IPERF3 with eight connections

Table 7 – AIX Network Performance with LSO Disabled using IPERF3 with eight connections

Table 8 – IBM i Network Performance with LSO Enabled using FTP

Table 9 – IBM i Network Performance with LSO Disabled using FTP

Summary

To maximize the network performance between systems in Kyndryl Cloud Uplift on Azure, one must consider latency and fragmentation and work to reduce both variables. This is not only true when looking at source and destination systems within Kyndryl Cloud Uplift on Azure regions, but also when moving data in and out of Kyndryl Cloud Uplift on Azure externally. In Kyndryl Cloud Uplift’s testing, it found these points critical to ensuring maximum network performance:

- TCP Send Offload should be enabled for both AIX and IBM i workloads.

- Make sure that source and target machines match both MTU and Send/Receive buffer settings.

- Do not use an MTU larger than 1500. Kyndryl Cloud Uplift recommends using an MTU between 1250 and 1330 for ExpressRoute and VPN connections.

- When using a VPN, look at setting an MSS Clamp no lower than 1200 and no higher than 1254.

- When transferring large amounts of data, look at using applications that can support multiple file transfer connections or transfer multiple files at once to maximize available bandwidth.

- It is important to remember each system and network can be different, so this needs to be accounted for in your tuning efforts.
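The checklist above can be turned into a small pre-flight check. The Python sketch below compares the settings of two endpoints against the ranges recommended in this article for ExpressRoute and VPN connections. The `EndpointSettings` class and `check_wan_pair` function are hypothetical helpers: they do not read values from AIX or IBM i, so you would fill them in from your own systems.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class EndpointSettings:
    mtu: int
    send_buffer: int  # bytes
    recv_buffer: int  # bytes


def check_wan_pair(
    source: EndpointSettings,
    target: EndpointSettings,
    mss_clamp: Optional[int] = None,
) -> List[str]:
    """Return a list of findings; an empty list means no issue was detected."""
    problems = []
    if source.mtu != target.mtu:
        problems.append("MTU differs between source and target")
    if (source.send_buffer, source.recv_buffer) != (target.send_buffer, target.recv_buffer):
        problems.append("Send/Receive buffer settings differ between source and target")
    for name, endpoint in (("source", source), ("target", target)):
        # Recommended range for ExpressRoute and VPN connections in this article.
        if not 1250 <= endpoint.mtu <= 1330:
            problems.append(f"{name} MTU {endpoint.mtu} is outside 1250-1330")
    if mss_clamp is not None and not 1200 <= mss_clamp <= 1254:
        problems.append(f"MSS clamp {mss_clamp} is outside 1200-1254")
    return problems


good = EndpointSettings(mtu=1330, send_buffer=2_097_152, recv_buffer=2_097_152)
jumbo = EndpointSettings(mtu=9000, send_buffer=2_097_152, recv_buffer=2_097_152)
print(check_wan_pair(good, jumbo, mss_clamp=1200))
```

These ranges are a baseline from the tests described here, so adjust them if measurements on your own network path point to different values.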

1 Tests were done using IPERF3 set to use up to eight connections.

2 One of the best “unofficial” resources on AIX tuning is Network Tuning in AIX by Jaqui Lynch. It is a must-read for anyone working on AIX networking.

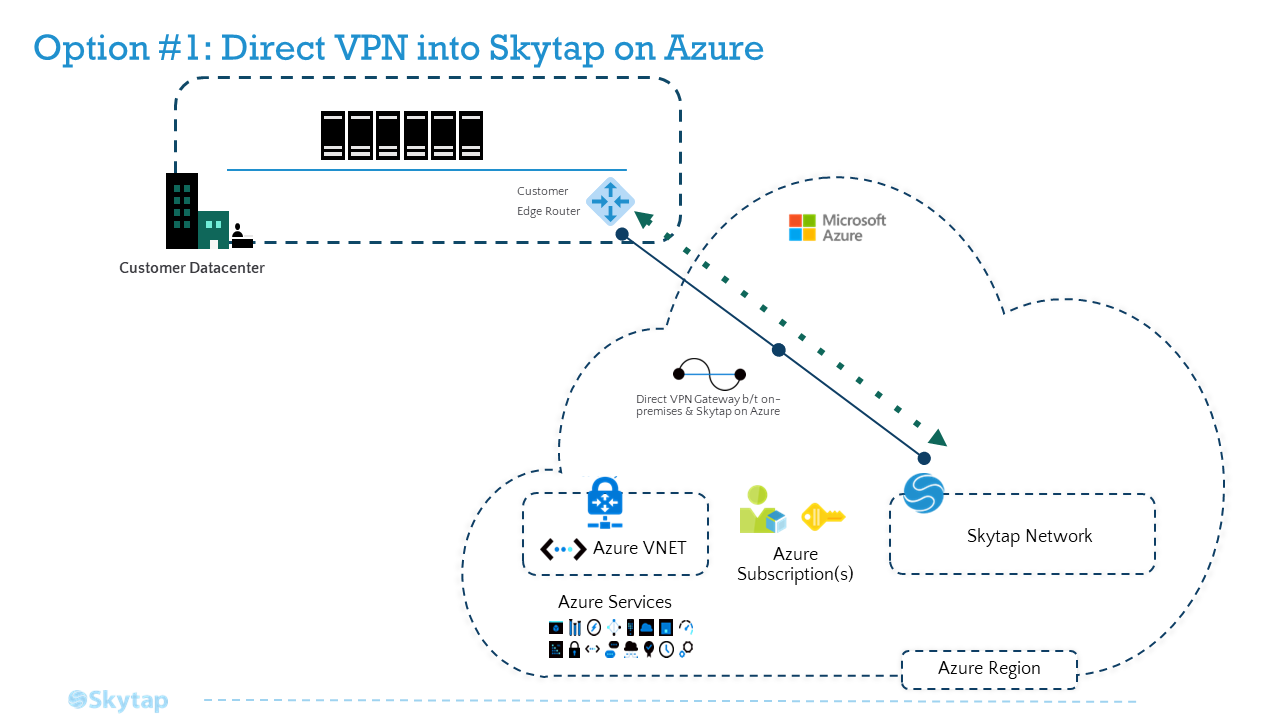

Option 1: Direct VPN Connection into Kyndryl Cloud Uplift

For context, this methodology will leverage a direct VPN into Kyndryl Cloud Uplift on Azure (bypassing the Azure hub network). While the Global Reach enabled ExpressRoute (option 3 below) is the most robust option, consider this direct VPN approach when the satellite facilities do not require access to additional Azure resources.

Keep in mind that this option may have the highest latency; however, the latency may be within the margin of successful operations. As is the case with all options, Kyndryl Cloud Uplift recommends conducting a Kyndryl Cloud Uplift Speed Test as mentioned above.

Links to helpful resources:

- Kyndryl Cloud Uplift VPN documentation on how to make VPN connections into Kyndryl Cloud Uplift on Azure:

Reference Architecture - Direct VPN

Setting up a Site-to-Site VPN between On-Premises and Kyndryl Cloud Uplift on Azure

Kyndryl Cloud Uplift’s built-in VPN service gives you a streamlined option to establish a VPN tunnel between your environment in Kyndryl Cloud Uplift and your on-premises deployment. The tunnel is encrypted, encapsulated, and routed over the public internet.

A VPN is like a bridge: both sides must be “facing each other” for traffic to flow. This means that each VPN endpoint must be configured the same way, with the same parameters. Before you begin, speak to your company’s IT department to find out which VPN parameters you’ll need to use for Kyndryl Cloud Uplift's side of the VPN.

Also like a bridge, VPNs are most reliable when the distance being spanned is short. This means the two endpoints should be as close together as possible. Choose the region in your Kyndryl Cloud Uplift account that is nearest to your corporate VPN endpoint, and create the static public IP address for your new VPN in that region.

Now you’re ready to create your VPN. Using the parameters you’ve agreed upon with your IT department, create the VPN endpoint in Kyndryl Cloud Uplift, and work with your company's IT department to set up a matching-configuration endpoint on your On-Premises VPN device. Once both endpoints are set up, make sure that you specify at least one remote subnet on the Kyndryl Cloud Uplift side (this is the on-prem network subnet that Kyndryl Cloud Uplift will be sending to and receiving from), and then be sure to test your VPN to confirm that it will properly establish a tunnel.

Once you’ve confirmed that your VPN can successfully establish Phase 1 and Phase 2 connectivity, connect your environment to the WAN (see Connecting environments to a WAN), choose a server from each side of the WAN topology that needs to talk to the other (one from Kyndryl Cloud Uplift and one from your on-premises network), and test the final end-to-end connectivity between the two servers.
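For the final end-to-end test, one simple option is to check whether a TCP port on the remote server accepts connections. The Python sketch below does this with the standard library only. The function name `can_connect` is illustrative, and the host and port in the usage comment are placeholders rather than values from your environment.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable networks.
        return False


# Example usage (placeholder address of an on-premises server, SSH port):
#   can_connect("10.0.0.10", 22)
```

Run it from a server on each side of the tunnel, so that traffic is confirmed in both directions and any firewall between the two is exercised.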

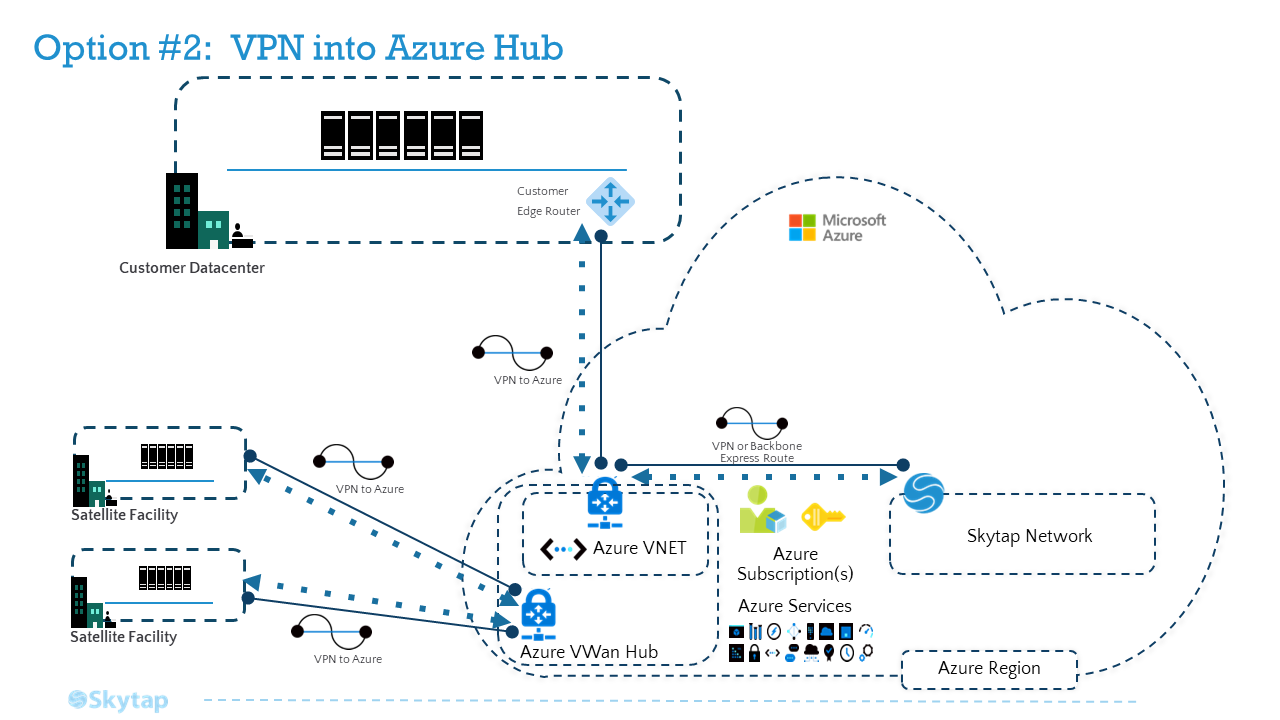

Option 2: VPN into Azure WAN Hub

For context, the VPN into Azure Hub option may provide a lower latency connection over Option 1 (direct VPN into Kyndryl Cloud Uplift) and perhaps even Option 3 due to the specific routing of your current ExpressRoute.

In this case, you will establish VPN connections between satellite facilities and the closest Azure VPN point-of-presence (POP), which uses the Azure backbone to reach your Azure hub region. Once there, you will use either an Azure Virtual WAN hub or an Azure Route Server.

Links to helpful resources:

- Specific Azure doc relevant to this option: About Azure Route Server support for ExpressRoute and Azure VPN

- VWAN Hub info: https://docs.microsoft.com/en-us/azure/virtual-wan/virtual-wan-about

Reference Architecture

Setting up a Site-to-Site VPN between Kyndryl Cloud Uplift and your Azure vNet Gateway

Site-to-Site VPNs that connect into Azure virtual network (vNet) Gateways are just like site-to-site VPNs that connect to on-premises deployments: they send traffic that is both encrypted and encapsulated. But there’s also something a little different: when Kyndryl Cloud Uplift VPNs use Azure’s own internet routing, traffic sent from Kyndryl Cloud Uplift to an Azure vNet via VPN will generally remain on the Azure backbone. This makes VPNs a relatively simple, lower cost, and behaviorally consistent option, especially for proofs-of-concept and Dev/Test applications.

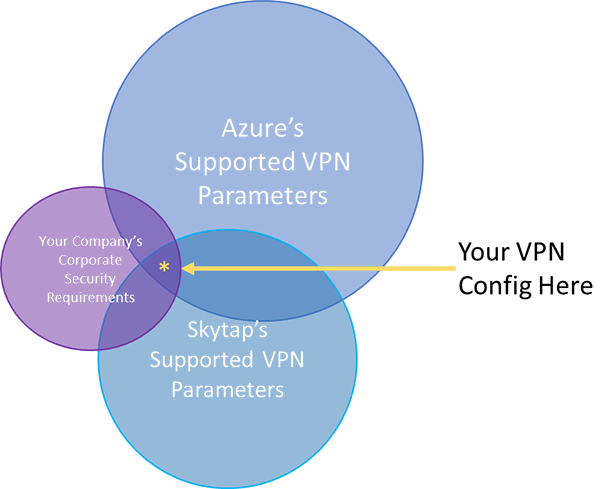

Setting up a Site-to-Site VPN between Kyndryl Cloud Uplift and Azure begins by sorting out which VPN parameters you’ll need to use. When connecting a Kyndryl Cloud Uplift VPN to an Azure vNet, the parameters you’ll configure on Kyndryl Cloud Uplift will be determined by the intersection of your IT department’s requirements, Kyndryl Cloud Uplift-supported parameter set, and Azure’s supported parameter set.

It’s worth noting that Kyndryl Cloud Uplift no longer supports Azure’s default IKE Phase 1 DH (Diffie-Hellman) Group—Group 2 (1024 bit)—due to its weak security. You’ll need to manually configure the parameters for your Azure VPN Gateway, rather than accepting the default configuration provided by Azure.

Due to Maximum Transmission Unit (MTU) limits between Azure and Kyndryl Cloud Uplift, you’ll also need to clamp the Kyndryl Cloud Uplift-side VPN maximum segment size (MSS) to 1200. (Although this means smaller packets, Kyndryl Cloud Uplift has found that clamping the MSS to 1200, rather than Azure’s standard-recommended 1350, gives the best performance and the least packet loss when the Kyndryl Cloud Uplift side of the VPN is sending data.) Clamping helps your packets flow freely between Kyndryl Cloud Uplift and Azure, rather than being dropped or bounced, which can cause your VPN traffic to be slow, or even come to a standstill. In the Kyndryl Cloud Uplift portal, the setting to clamp your VPN’s MSS is at the bottom of the Edit WAN page.

Since VPNs are most reliable when the distance being spanned is short, the two endpoints should be as close together as possible. Choose the region in your Kyndryl Cloud Uplift account that is nearest to your existing Azure vNet, and create the static public IP address for your new VPN in that region.

Now you’re ready to create your VPN. Using the parameters you’ve agreed upon with your IT department, create the VPN endpoint in Kyndryl Cloud Uplift. Then configure the VPN Gateway to your Azure virtual network. Once both endpoints are set up, make sure that you specify at least one remote subnet on the Kyndryl Cloud Uplift side (this is the on-prem network subnet that Kyndryl Cloud Uplift will be sending to and receiving from), and then be sure to test your VPN to confirm that it will properly establish a tunnel.

Once you’ve confirmed that your VPN can successfully establish Phase1 and Phase2 connectivity, connect your VPN to a Kyndryl Cloud Uplift Environment, choose a server from each side of the WAN topology that needs to talk to each other (one from Kyndryl Cloud Uplift and one from your on-premises network), and test the final end-to-end connectivity between each server.

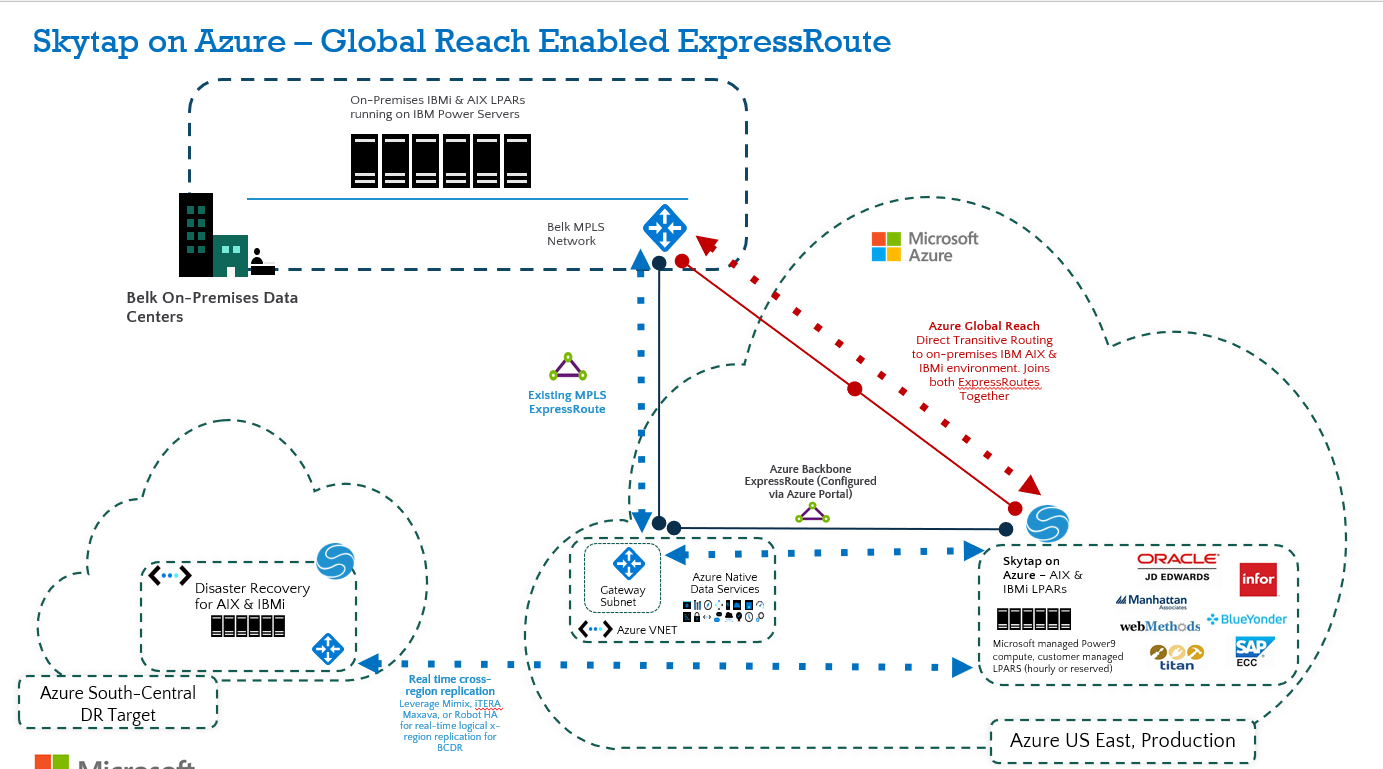

Option 3: Global Reach Enabled ExpressRoute

This is the recommended robust connection path for performance-sensitive workloads connecting directly between Kyndryl Cloud Uplift and on-premises. Keep in mind, you will need to consider your existing ExpressRoute configuration and latency between the endpoints.

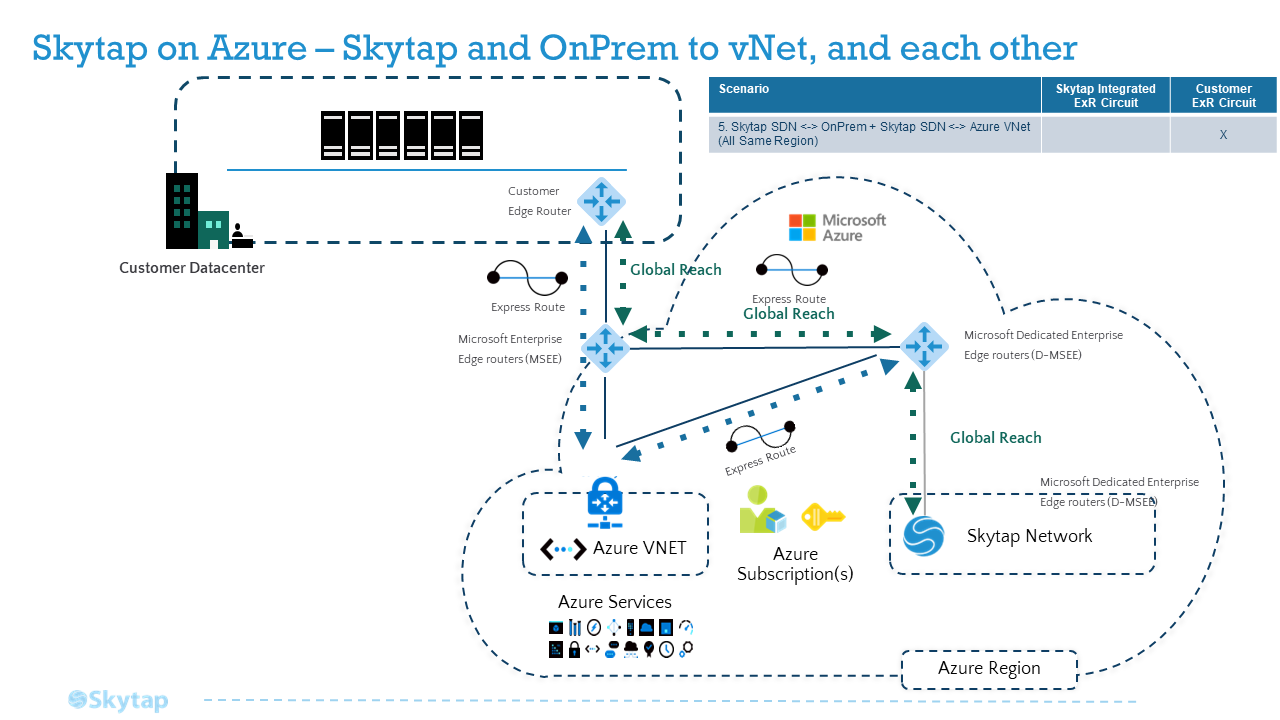

This approach will give you transitive routing between on-premises and Kyndryl Cloud Uplift on Azure. To set this up, create a new Azure Backbone ExpressRoute connection (see architecture below) between the Kyndryl Cloud Uplift on Azure service in Azure US East and your Azure hub. Note that the backbone ExpressRoute is not a new MPLS ExR circuit; rather, it’s a native Azure ExR configured in the Portal that does not require you to engage a Telco provider. Once configured in the Azure Portal, Microsoft will create this connection on the backend (may take 20-30 mins. to propagate). Once the Backbone ExpressRoute is established between Kyndryl Cloud Uplift and your Azure hub, you will enable Global Reach to allow transitive routing between both ExpressRoutes.

Setting up Global Reach Enabled ExpressRoute to Kyndryl Cloud Uplift

Pre-requisites – Global Reach Enabled ExpressRoute

- Azure administrative rights to create the Azure resources:

  - “Back Bone” ExpressRoute. This is not a Telco ExpressRoute (Step 1)

  - Global Reach (Step 3)

- /29 IP address space for Global Reach (Step 3 below)

- Any specific application ports that need to traverse your on-premises firewall.

  - The ExpressRoute connection into the Kyndryl Cloud Uplift service is not firewalled, so all ports are open; however, it is a private connection in your network.

  - The Kyndryl Cloud Uplift UI does have standard ports it uses for access as detailed in the Appendix. Note: Some public IP addresses are dependent on the region deployed. The IPs listed in the Appendix are based on the intended region for deployment.

Recommended Path

The recommended path to connect Kyndryl Cloud Uplift on Azure to on-premises and Azure resources is a customer-managed ExpressRoute from Kyndryl Cloud Uplift that has Global Reach enabled to allow network transit from Kyndryl Cloud Uplift to on-prem.

-

Step 1: Create the Azure circuit that your Kyndryl Cloud Uplift Customer-Managed ExpressRoute will go through

-

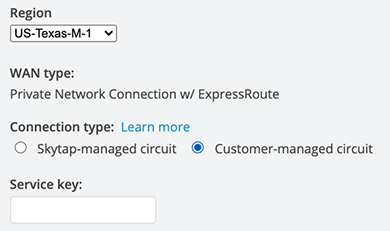

Step 2: Create the WAN connection in Kyndryl Cloud Uplift on Azure

- Creating and editing a Private Network Connection with ExpressRoute for your Kyndryl Cloud Uplift account

- Be sure to select “Customer-managed circuit” when creating your Kyndryl Cloud Uplift WAN per the instructions above

-

- Enter the service key from Step 1, where you created the ExpressRoute circuit in Azure

- On the WAN Details page for your new circuit, enter the remote subnet of the on-premises network which your existing on-premises ER circuit connects to

- Wait to enable the WAN in Kyndryl Cloud Uplift until the “pending” notification is no longer there and you have completed the Global Reach steps below

-

Step 3: Peer your new ExpressRoute circuit with your existing on-premises circuit using Global Reach

- Azure ExpressRoute: Configure Global Reach using the Azure portal

- Please note that Step 3 in the Global Reach setup requires a /29 IP address space from your network team that does not overlap with any other on-prem or Azure networks you have deployed.

- Be sure to notify your security / firewall team of the application ports that need to traverse the connection from Kyndryl Cloud Uplift on Azure to on-prem and back
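Before you hand a candidate /29 to the Global Reach setup, it can help to check it against the networks you already use. The following is a minimal Python sketch using only the standard `ipaddress` module. The function name and the example prefixes are hypothetical, and the list of existing networks is something you would need to gather from your own network team.

```python
import ipaddress


def validate_global_reach_prefix(candidate, existing_networks):
    """Return a list of problems; an empty list means no issue was found.

    candidate: the proposed Global Reach prefix, e.g. "192.168.100.0/29".
    existing_networks: CIDR strings for on-prem and Azure networks in use
    (assumed to be supplied by the caller).
    """
    # strict=True raises ValueError if host bits are set (e.g. 10.0.0.1/29).
    net = ipaddress.ip_network(candidate, strict=True)
    problems = []

    if net.version != 4 or net.prefixlen != 29:
        problems.append(f"{net} is not an IPv4 /29")

    for other in existing_networks:
        other_net = ipaddress.ip_network(other, strict=False)
        if net.version == other_net.version and net.overlaps(other_net):
            problems.append(f"{net} overlaps {other_net}")

    return problems


if __name__ == "__main__":
    # Illustrative values only; substitute your own address plan.
    in_use = ["10.0.0.0/16", "172.16.0.0/12"]
    print(validate_global_reach_prefix("192.168.100.0/29", in_use))
    print(validate_global_reach_prefix("10.0.1.0/29", in_use))
```

An empty list only means the prefix does not collide with the networks you supplied. It does not confirm that Azure will accept it.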

Step 4: Enable the WAN in Kyndryl Cloud Uplift

- Review and follow Step 16 in the Creating and editing a Private Network Connection with ExpressRoute for your Kyndryl Cloud Uplift account Guide.

Step 5: Attach the WAN to your Kyndryl Cloud Uplift Environment

- Connecting an environment network to a VPN or Private Network Connection

- Please note, there must be at least one running VM and/or LPAR in the environment and on the network for the connection to start

Step 6: Test your connection from the VM or LPAR in Kyndryl Cloud Uplift to an on-prem system within the remote networks set on the WAN during Step 2

- A simple ping test is enough to confirm that traffic flows all the way through
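Ping uses ICMP, so it does not tell you whether a specific application port passes your on-premises firewall. As a complement, the following minimal Python sketch could be run from a VM or LPAR that has Python 3 available. It first checks that the target address falls inside the remote subnets configured on the WAN in Step 2, then attempts a TCP connection to an application port. The function names, addresses, and port are hypothetical placeholders.

```python
import ipaddress
import socket


def in_remote_subnets(target_ip, remote_subnets):
    """True if target_ip falls inside any of the WAN's remote subnets."""
    addr = ipaddress.ip_address(target_ip)
    return any(
        addr in ipaddress.ip_network(subnet, strict=False)
        for subnet in remote_subnets
    )


def tcp_port_open(host, port, timeout=3.0):
    """True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable networks.
        return False


if __name__ == "__main__":
    # Illustrative values only; use the remote subnets from Step 2 and
    # an on-prem host and port that your application really uses.
    remote_subnets = ["10.20.0.0/16"]
    target, port = "10.20.5.7", 443

    if not in_remote_subnets(target, remote_subnets):
        print(f"{target} is outside the remote subnets set on the WAN")
    elif tcp_port_open(target, port):
        print(f"TCP {port} on {target} is reachable")
    else:
        print(f"TCP {port} on {target} is not reachable")
```

A failed TCP check with a successful ping usually points at a firewall rule rather than at the ExpressRoute or Global Reach configuration.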

Reference Architecture - Global Reach Enabled ExpressRoute

Appendix

Kyndryl Cloud Uplift UI ports:

ExpressRoute and Global Reach

While VPNs are a solid and commonplace solution for traffic isolation and encapsulation, they do suffer from the “vagaries” of the public internet: at any time, new routing hubs can come online, BGP paths can change, and variable internet congestion can degrade your application’s throughput. For performance-sensitive workloads, Azure and Kyndryl Cloud Uplift recommend Azure ExpressRoute, a private network connection service which can be configured between your on-premises site and Azure, Kyndryl Cloud Uplift and Azure, or both.

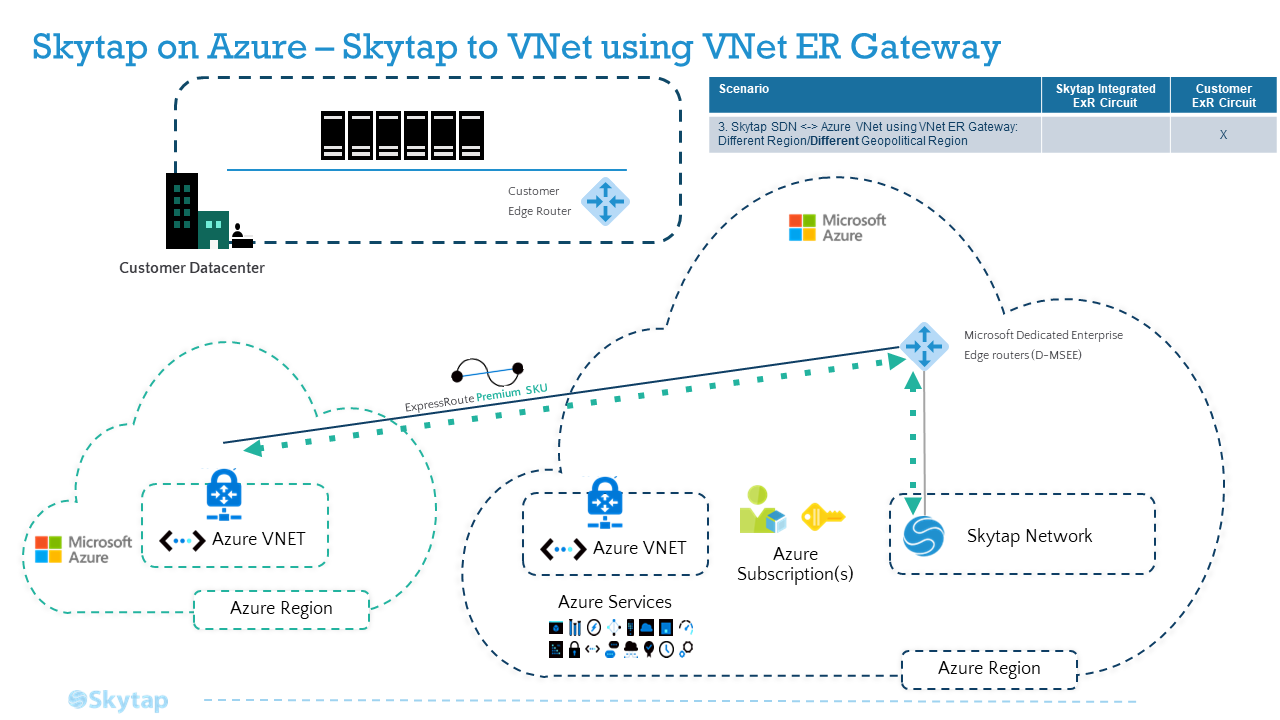

Creating ExpressRoutes with Kyndryl Cloud Uplift is a fairly simple process. ExpressRoutes created by Kyndryl Cloud Uplift are all configured as 1 Gbps circuits using the Standard SKU. Alternatively, you can create an ExpressRoute in Azure using the set of parameters Kyndryl Cloud Uplift supports, and connect it to Kyndryl Cloud Uplift. This is ideal when you want an ExpressRoute with a Premium SKU or with Global Reach, neither of which is supported on ERs created by Kyndryl Cloud Uplift.

What kind of ExpressRoute circuit(s) you’ll need, and how they’ll be connected to other parts of your WAN, will depend on where your traffic needs to go. In each of the following topologies, whether you need a ‘Standard’ or ‘Premium’ ExpressRoute depends on whether your source and target locations are in the same geopolitical region.

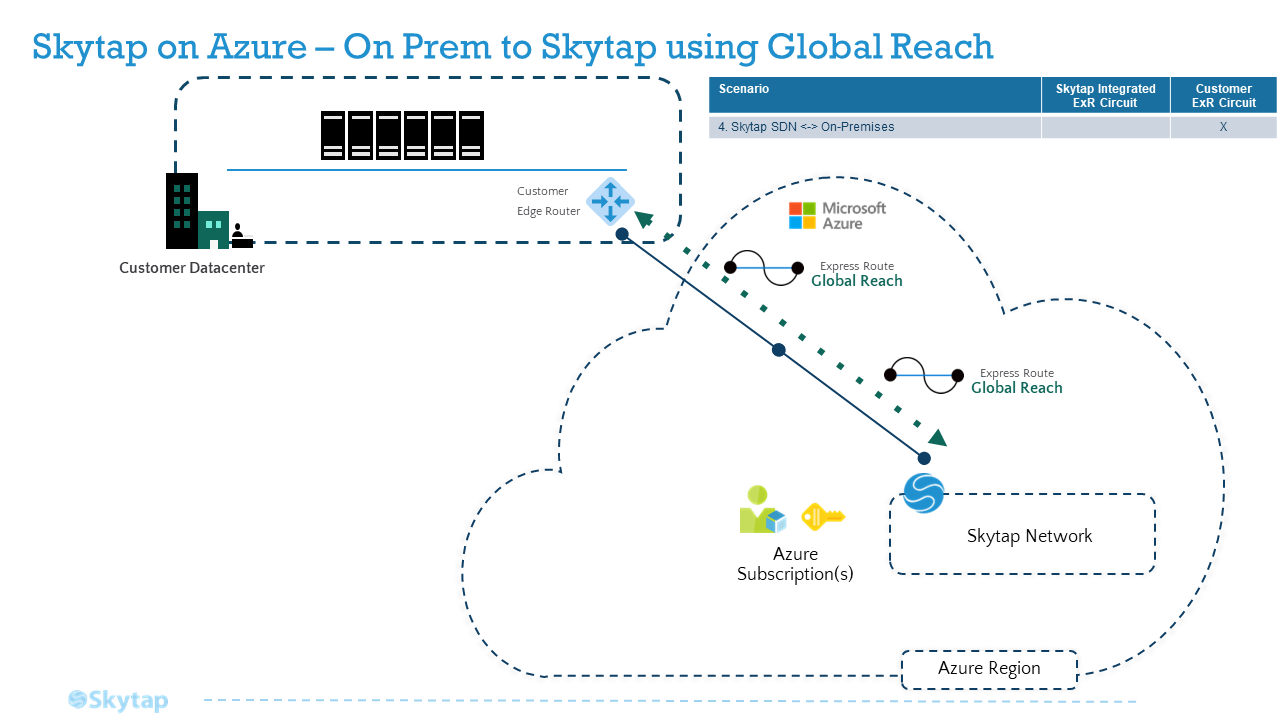

To connect your Kyndryl Cloud Uplift application just to On-Premises, using an existing ExpressRoute to On-Premises

To connect your application in Kyndryl Cloud Uplift to On-Premises, when you already have an ExpressRoute between Kyndryl Cloud Uplift and Azure, follow Option 03: Global Reach Enabled ExpressRoute.

To connect your Kyndryl Cloud Uplift application just to an Azure Virtual Network (vNet) through an ExpressRoute

Walk through the Kyndryl Cloud Uplift help documentation to follow the three major steps of connecting your Kyndryl Cloud Uplift application to your vNet: Create the ER; Configure a vNet Gateway; and Connect the circuit. An even more detailed walkthrough can be found here:

Reference Architecture - Azure Virtual Network (vNet) through an ExpressRoute

To connect your Kyndryl Cloud Uplift application to both On-Premises and an Azure vNet

The two network topologies described above can also happily co-exist: you can connect the same ExpressRoute circuit from Kyndryl Cloud Uplift in the first case to the vNet Gateway in the second case.

There’s one important caveat for this topology: any traffic sent through the vNet Gateway won’t transit to On-Prem, even if the On-Prem ExpressRoute circuit is also connected to that same vNet. So if, for example, you need your application traffic to flow to services on Azure, such as a firewall, an Azure VM, or other SaaS offering, before it moves out to on-premises, you’ll need transitive routing. To set up transitive routing, follow the steps in Example 5.

To connect your Kyndryl Cloud Uplift application over an existing On-Premises ExpressRoute circuit, when the connection between Kyndryl Cloud Uplift and Azure is a VPN

VPNs between Kyndryl Cloud Uplift and Azure are a cost-efficient, low-friction way to carry traffic that is encrypted, encapsulated, and generally stays within Azure’s own backbone. If you want to connect a VPN from Kyndryl Cloud Uplift to an existing ExpressRoute circuit to your On-Premises site, according to Azure documentation, this topology requires an Azure Route Server. Microsoft’s new Azure Route Server is a high-availability managed service that allows network virtual appliances (NVAs) such as firewalls, ExpressRoute circuits, and VPNs to connect to one another.

Once you’ve created a VPN from Kyndryl Cloud Uplift to Azure, follow the Azure instructions to create a Route Server on the vNet, and configure route exchange to your Kyndryl Cloud Uplift VPN and your On-Premises ExpressRoute.

To connect your Kyndryl Cloud Uplift application to both On-Premises and an Azure vNet, when you need transitive routing

Applications commonly need to send traffic from On-Premises into an Azure vNet with one or more preexisting Azure SaaS solutions, and then onward to a Kyndryl Cloud Uplift Environment. Traffic frequently needs to flow the other way as well.

If the SaaS objects in Azure are VMs and NVAs (such as firewalls) on a particular vNet, Azure Route Server is a low-friction solution, as shown in Example 4.

If the topology you’re trying to mesh is relatively simple, you can set up the configuration yourself by establishing the proper peering relationships and route tables in Azure, bearing in mind that you must be careful to avoid the pitfalls around asymmetric routing.

However, if you need to connect several (or complex topologies of) vNets, you can't get the necessary ASNs, your NVAs don’t support BGP, or Route Server won’t work for you for any other reason, an Azure Virtual WAN (vWAN) is a full-service solution for configuring transitive connectivity between Kyndryl Cloud Uplift, Azure, and On-Premises. The vWAN is a global transit network, with regional hubs. You can use it to establish transitive routing between multiple ExpressRoutes (such as an On-Premises ER, through Azure, to a Kyndryl Cloud Uplift ER), or between an ExpressRoute and a VPN (such as an On-Premises ER, through Azure, to a Kyndryl Cloud Uplift VPN), and through any meshed topology of regional vNets. To allow traffic to transit two ExpressRoutes that are both connected to your vWAN mesh, you’ll need to peer the ER circuits with Global Reach, as in Example 1.
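The choice between Route Server and vWAN described above can be summarized as a small decision helper. This is only a sketch of the reasoning in this section, written in Python. The class, field names, and return values are hypothetical, and it does not cover the manual peering and route table approach or other constraints your design may have.

```python
from dataclasses import dataclass


@dataclass
class TransitNeeds:
    """Caller-supplied facts about the topology (all names are illustrative)."""
    multiple_or_complex_vnets: bool    # several vNets or a complex mesh
    asns_available: bool               # you can obtain the necessary ASNs
    nvas_support_bgp: bool             # your NVAs can speak BGP
    both_sides_use_expressroute: bool  # On-Premises ER and Cloud Uplift ER


def suggest_transit_option(needs):
    """Return (suggested service, whether to peer the ERs with Global Reach)."""
    route_server_ruled_out = (
        needs.multiple_or_complex_vnets
        or not needs.asns_available
        or not needs.nvas_support_bgp
    )
    if route_server_ruled_out:
        # This section only states the Global Reach requirement for two
        # ExpressRoutes that are both connected to the vWAN.
        return "Azure Virtual WAN", needs.both_sides_use_expressroute
    return "Azure Route Server", False


if __name__ == "__main__":
    single_vnet = TransitNeeds(False, True, True, True)
    meshed_vnets = TransitNeeds(True, True, True, True)
    print(suggest_transit_option(single_vnet))
    print(suggest_transit_option(meshed_vnets))
```

Treat the result as a starting point for a conversation with your network team, not as a substitute for the Azure design guidance.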

Reference Architecture - Azure Virtual Network (vNet) to both On-Premises and an Azure vNet through an ExpressRoute with Global Reach